NVIDIA’s GPU-Accelerated Computing on the Rise

NVIDIA recently unveiled its next-generation Volta GPU (graphics processing unit). In this series, we’ll look at the growth opportunities NVIDIA is pursuing in GPU-accelerated computing.

June 22, 2017, Updated 3:08 p.m. ET

NVIDIA’s Investor Day

NVIDIA (NVDA) has been the center of attention among investors and analysts since the company reported better-than-expected fiscal 1Q18 earnings. It also unveiled its next-generation Volta GPU at the 2017 GPU Technology Conference and held its Investor Day. Volta could help NVIDIA stay ahead of Advanced Micro Devices (AMD), which is set to launch its Vega GPU in June 2017.

At its 2017 Investor Day, NVIDIA management did not provide any financial targets but discussed the growth opportunities the company is pursuing. NVIDIA wants to expand GPU-accelerated computing beyond the data center and into smart cities, industry, automotive, and manufacturing. These markets could expand its total addressable market to around $70 billion by 2020.

NVIDIA’s GPU-accelerated computing

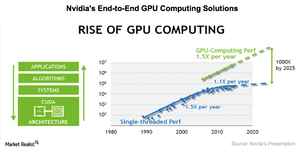

While NVIDIA has been working on GPU-accelerated computing for over a decade, the technology industry (QQQ) only began to shift its focus to this approach about a year ago. Before that, the industry relied on general-purpose microprocessors, a market Intel (INTC) dominates. However, as Moore’s Law has slowed, data centers need alternative ways to improve computing performance.

According to Moore’s Law, the number of transistors on a chip doubles roughly every two years as transistors shrink, boosting performance and power efficiency. Microprocessor performance gains have therefore depended largely on packing in more transistors.
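As a rough illustration of the doubling rule described above, assuming an idealized doubling period of exactly two years (real-world cadence has varied), the transistor count after t years grows from a starting count N₀ as:

$$
N(t) = N_0 \cdot 2^{t/2}, \qquad N(10) = N_0 \cdot 2^{5} = 32\,N_0
$$

In other words, a decade of uninterrupted doubling would yield about 32 times as many transistors, which is why any slowdown in that cadence has such a large effect on expected performance gains.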

NVIDIA has taken a different approach. Instead of relying solely on transistor scaling, it has built an entire platform that improves computing performance at multiple levels, including processor architecture, system software, algorithms, and applications.

NVIDIA’s platform development process

NVIDIA invests heavily in developing its GPU architectures, such as Maxwell, Pascal, and Volta. It then designs product lines based on each architecture: Tesla for data centers, GeForce for gaming, Quadro for professional visualization, Jetson for embedded devices, and Drive PX for cars.

Once a design is ready, NVIDIA has the chips manufactured by TSMC (TSM). The GPUs are then programmed using CUDA, NVIDIA’s parallel computing platform and API (application programming interface). NVIDIA’s developers also work with customers to develop algorithms that make the GPU domain-specific. For instance, CUDA can be used to program a GPU to recognize voice commands, and domain-specific algorithms can then tailor it to understand commands that use medical or legal terminology.